This blog runs on Ghost. I like Ghost quite a bit. Sadly, I was often remiss in updating to the latest version. This weekend I finally got to the todo item of updating this site.

To make things easier on myself in the future, I decided to use Docker and ECS to deploy the site. This decision was largely fueled by the availability of an official Ghost image on Docker Hub and the ease of updating versions with Docker and ECS.

Before we get started with ECS I'm going to get things running locally to ensure that everything is peachy.

Locally via Docker

I'm assuming that you have some baseline understanding of Docker. Beyond the obvious, this means you're familiar with mounting volumes, configuring container links, and exposing ports.

Setup

The first thing I did to build out this site on ECS was create a local Docker version of the pieces. This consists of two containers:

- Ghost - the blog code

- NGINX - reverse proxy in front of the Node process

The first step was creating a few directories on my MacBook for providing information to the containers. I created two directories: ghost and nginx.

mkdir -p ~/Documents/blog/{ghost,nginx}

I then grabbed all of the user data for my blog and copied it to the ghost directory. That directory now holds all my content (data, images, and themes) along with the config.js file.

Ghost Container

With everything set up, I can run Ghost as a container with the following Docker run command:

docker run -d --name ghost \
  -v ~/Documents/blog/ghost:/var/lib/ghost \
  -p 2368:2368 \
  -e NODE_ENV=production \
  ghost

A few things are going on here:

- -d: runs the container in detached (background) mode

- --name ghost: names the container ghost for easy reference later

- -v ~/Documents/blog/ghost:/var/lib/ghost: mounts the local path of my blog content into /var/lib/ghost on the container. This is where the container expects the blog data to live.

- -p 2368:2368: maps port 2368 on the host to port 2368 on the container

- -e NODE_ENV=production: sets the NODE_ENV environment variable to production so that Ghost runs in production mode

With the container running, I can access the blog at http://localhost:2368!

Next up is getting NGINX running to perform request forwarding.

NGINX Container

We start configuring NGINX by creating a configuration file for the blog in our previously created folder:

vim ~/Documents/blog/nginx/blog.conf

Creating a config for NGINX that will map to the Ghost container looks like this:

## derpturkey.com
server {
    listen 80;
    server_name derpturkey.com;

    ## Forward requests
    location / {
        proxy_pass http://ghost:2368;
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
    }
}

The above config listens on port 80 for requests to the derpturkey.com domain. These requests are forwarded to the host named ghost on port 2368.

With this all set up, we can now start the NGINX container with the following run command:

docker run -d --name nginx \
  --link ghost:ghost \
  -v ~/Documents/blog/nginx/blog.conf:/etc/nginx/conf.d/blog.conf \
  -p 80:80 \
  nginx

A few things are going on here:

- -d: runs the container in detached (background) mode

- --name nginx: names the container nginx

- --link ghost:ghost: creates a container link to the container named ghost and aliases it as ghost inside the NGINX container. This means that the NGINX container can now find the Ghost container as ghost.

- -v ~/Documents/blog/nginx/blog.conf:/etc/nginx/conf.d/blog.conf: creates a volume that mounts blog.conf on the host to the path where enabled configurations live inside the NGINX container

- -p 80:80: maps port 80 on the host to port 80 inside the container. Note: this requires that you don't have anything else bound to port 80 on your machine.

The last thing was adding a host entry on my machine so that NGINX works correctly (the config expects the host derpturkey.com):

sudo vim /etc/hosts

127.0.0.1 derpturkey.com

And voila, it works! Then I deleted all that hard work because it's time for ECS.
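For reference, the two run commands above could also be captured in a single Compose file. This is only a sketch of the same setup, not something I deployed: it assumes you run it from ~/Documents/blog, and it relies on Compose's default network (where a service is reachable by its service name) instead of --link.

```shell
# Write a Compose file mirroring the two docker run commands.
# Run this from ~/Documents/blog so the relative paths resolve.
cat > docker-compose.yml <<'EOF'
services:
  ghost:
    image: ghost
    environment:
      NODE_ENV: production
    volumes:
      - ./ghost:/var/lib/ghost
    ports:
      - "2368:2368"
  nginx:
    image: nginx
    # Start ghost first so the "ghost" hostname resolves when NGINX boots.
    depends_on:
      - ghost
    volumes:
      - ./nginx/blog.conf:/etc/nginx/conf.d/blog.conf:ro
    ports:
      - "80:80"
EOF
```

With that file in place, docker compose up -d should bring up both containers together.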

Deploying via ECS

Elastic Container Service (ECS) is Amazon's play in the container management game. I'll walk through a few concepts before going over how to translate the above into ECS.

- Cluster: really just the name for an ECS deployment

- ECS Instances: the actual servers that containers run on. They are specially configured EC2 instances that are running the ECS agent.

- Tasks: most easily comparable to a Docker Compose file. They allow you to define a group of containers and the run configuration for those containers.

- Services: the deployments of Tasks into the Cluster

Those definitions out of the way...

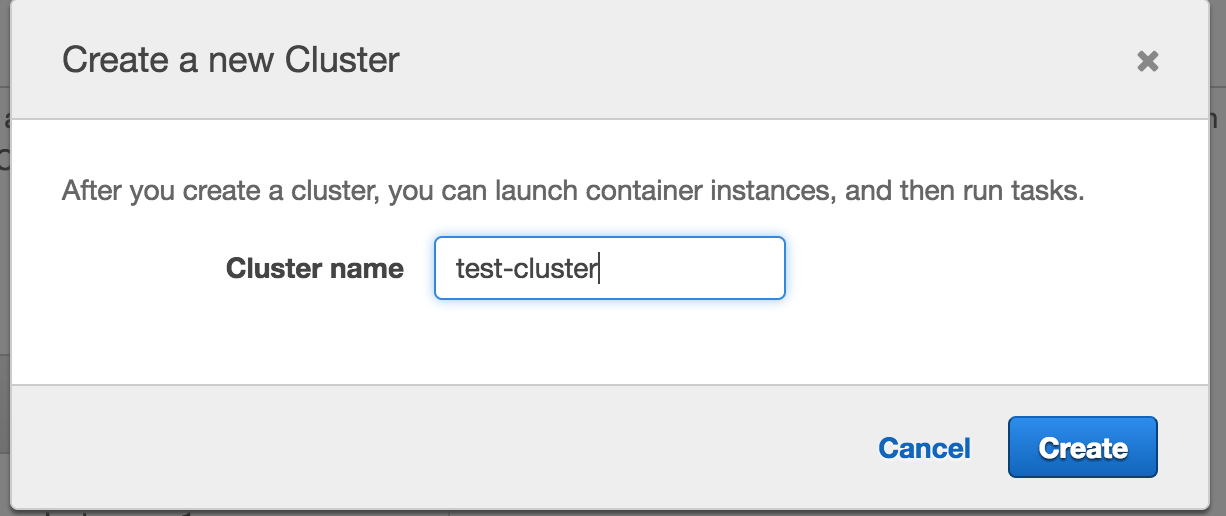

Step 1: Create the Cluster

The first step is creating the Cluster. I did this by navigating to the ECS Management page in Amazon and creating a new Cluster. Basically, you just name the cluster.

Step 2: Create ECS Instances

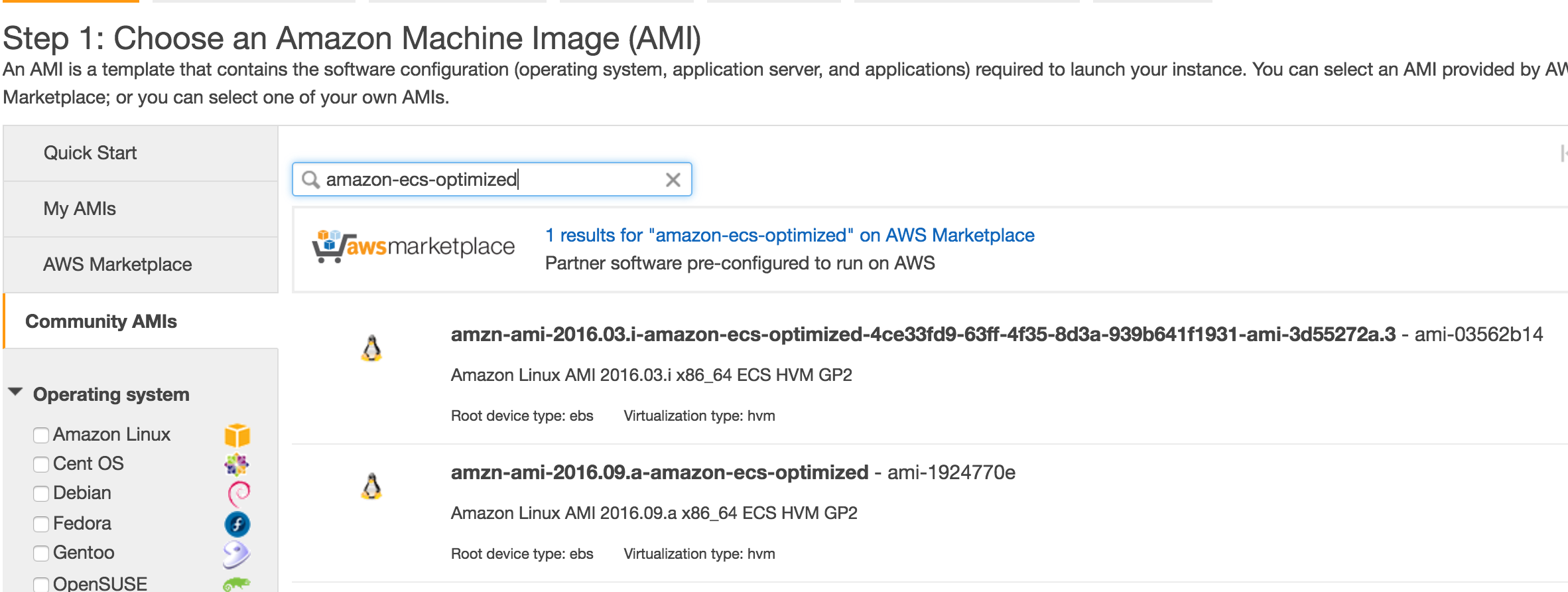

Once I had the cluster created, I had to create some EC2 Instances to join the cluster. Amazon provides decent information on creating ECS instances but I'll walk you through the basics.

The easiest way is to search the Community AMIs for amazon-ecs-optimized. This will provide a list of AMIs from Amazon that work with ECS. At the time of this writing, amzn-ami-2016.09.a-amazon-ecs-optimized was the most recent image.

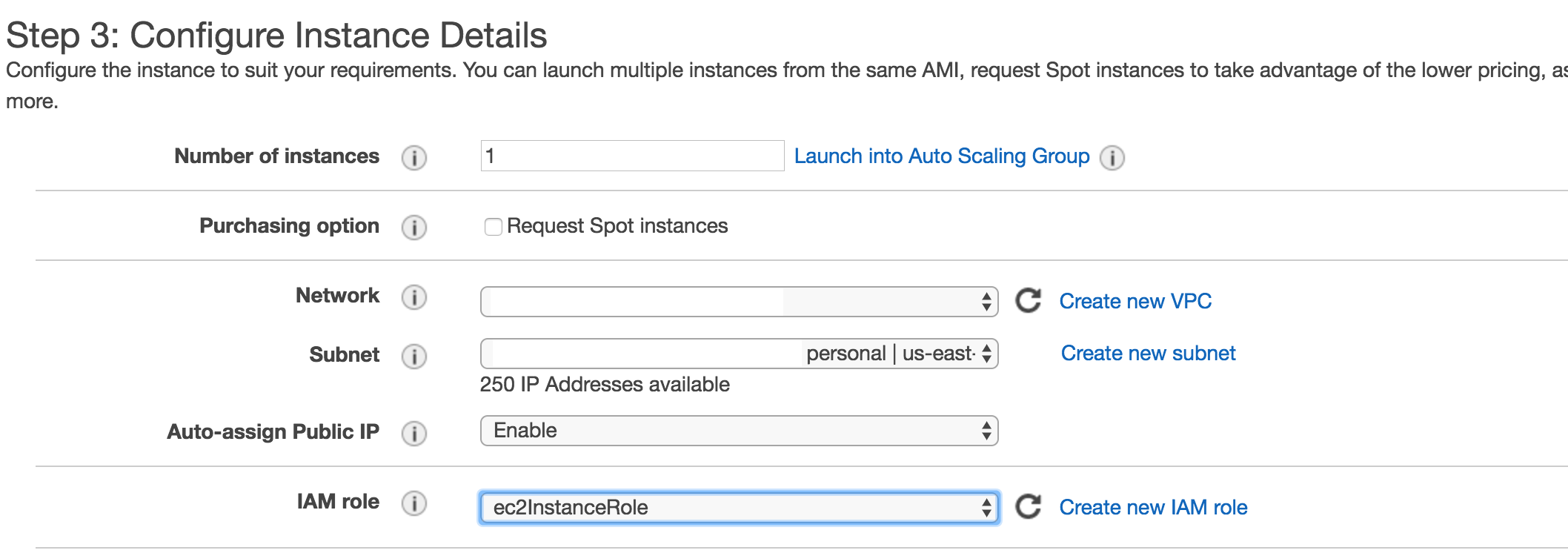

The next step is configuring the instance. This involves adding it to the VPC and giving it a public IP address, which the instance needs in order to connect to the ECS service.

Additionally, the instance will need to be launched with the IAM Role of ec2InstanceRole. More information about setting up this role can be found here.

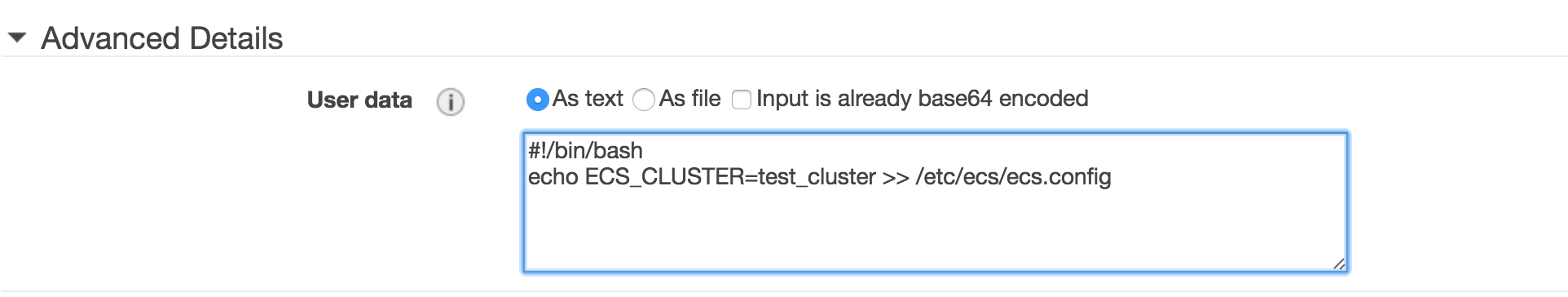

Finally, you need to tell the EC2 instance to join the cluster. This can be done in the Advanced section by adding a script block to configure the ECS agent. You simply provide the name of the cluster you previously created as the ECS_CLUSTER key/value.
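As a rough sketch, the script block can be as small as one line that appends the cluster name to the ECS agent's config file at /etc/ecs/ecs.config. The snippet below generates that script locally so you can paste its contents into the Advanced section. The cluster name blog-cluster and the file name ecs-user-data.sh are placeholders of mine, so substitute the name you chose in Step 1.

```shell
# Placeholder cluster name (an assumption); use the cluster you created in Step 1.
CLUSTER_NAME="blog-cluster"

# Generate the user data script that the instance runs on first boot.
cat > ecs-user-data.sh <<EOF
#!/bin/bash
# Tell the ECS agent which cluster this instance should join.
echo "ECS_CLUSTER=${CLUSTER_NAME}" >> /etc/ecs/ecs.config
EOF
```

The generated file is what goes into the user data field; nothing needs to run on your own machine.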

Complete your instance creation process and wait a few minutes for the instance to start. It should appear in the cluster after it has started.

Step 3: Create the Task

Next is actually mapping the Docker run commands previously executed into tasks. Start by creating a new task definition.

Volumes

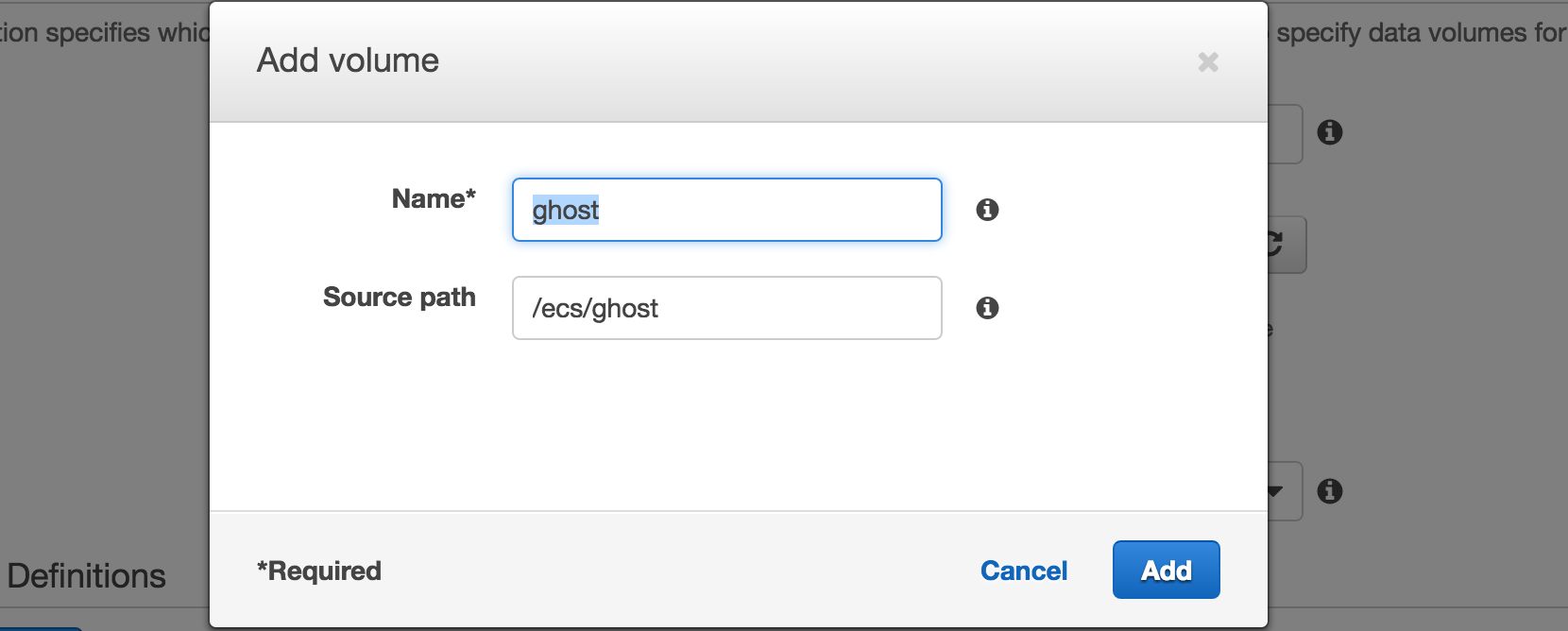

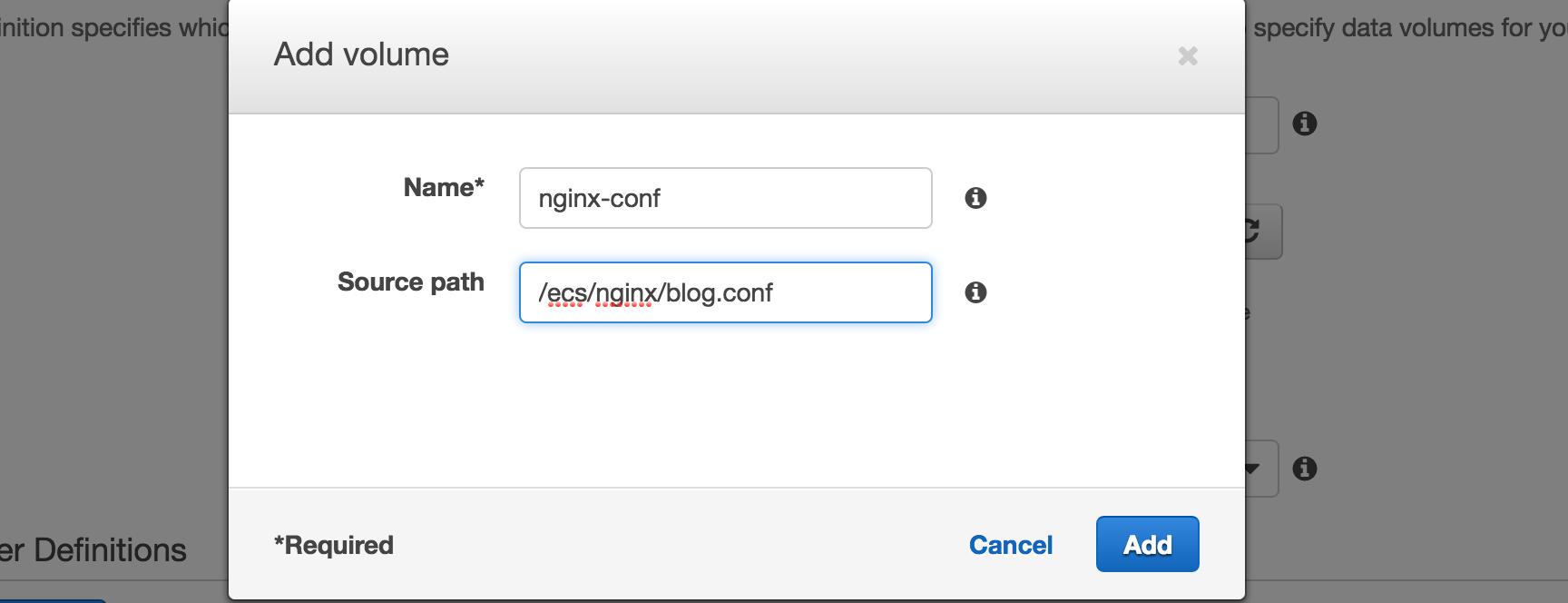

Before I create the container definitions, I want to first create two volumes that correspond to the folders we created on our local machine.

The two volumes define the path to the volume data on our host instance.

Important: After creating these volumes, you'll want to log into the EC2 instance you previously created and create the /ecs/ghost and /ecs/nginx folders. Once the folders exist, you can copy the data from your local machine into them.

Ghost Container

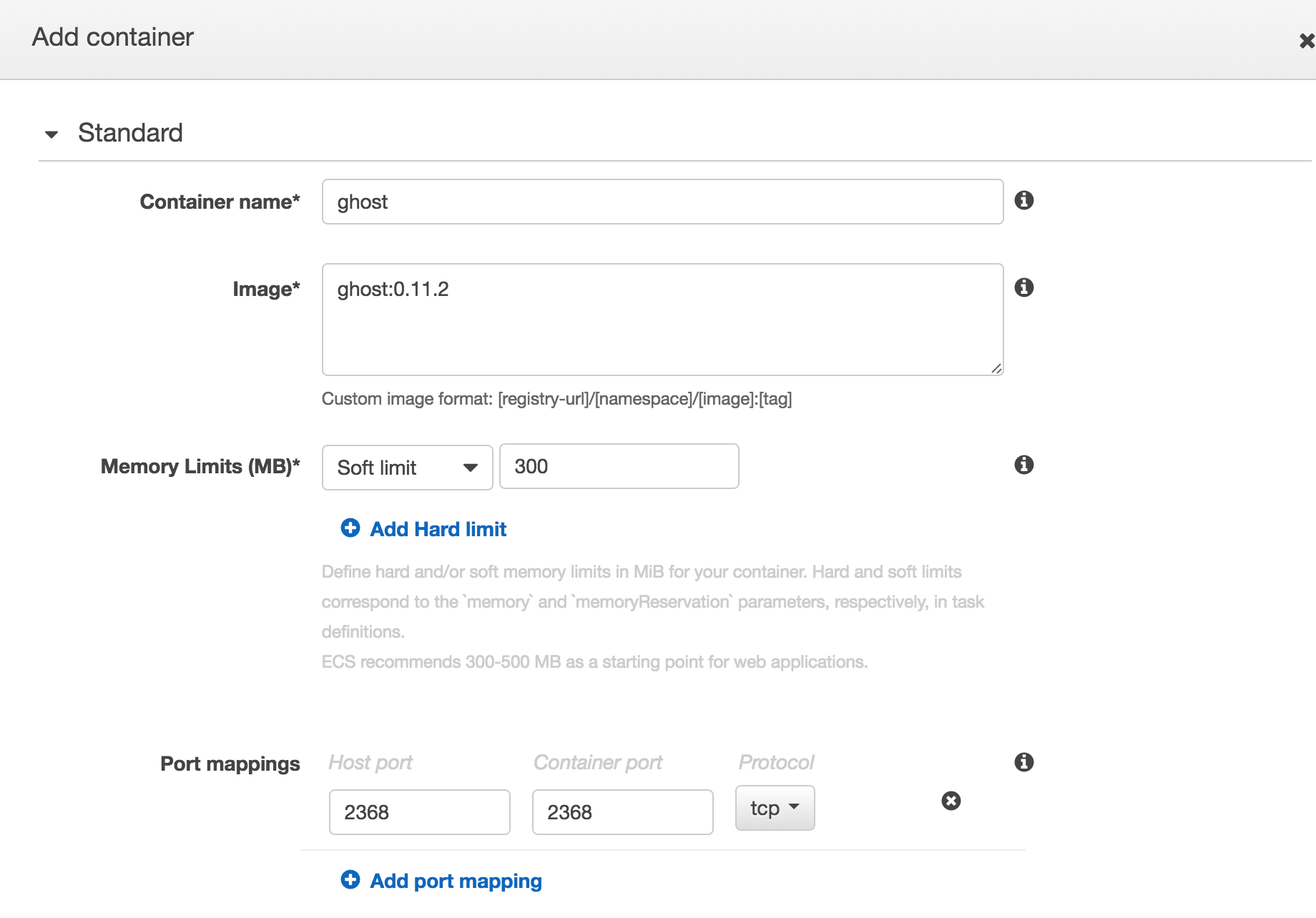

Now that I had volumes, I started by creating a container for Ghost.

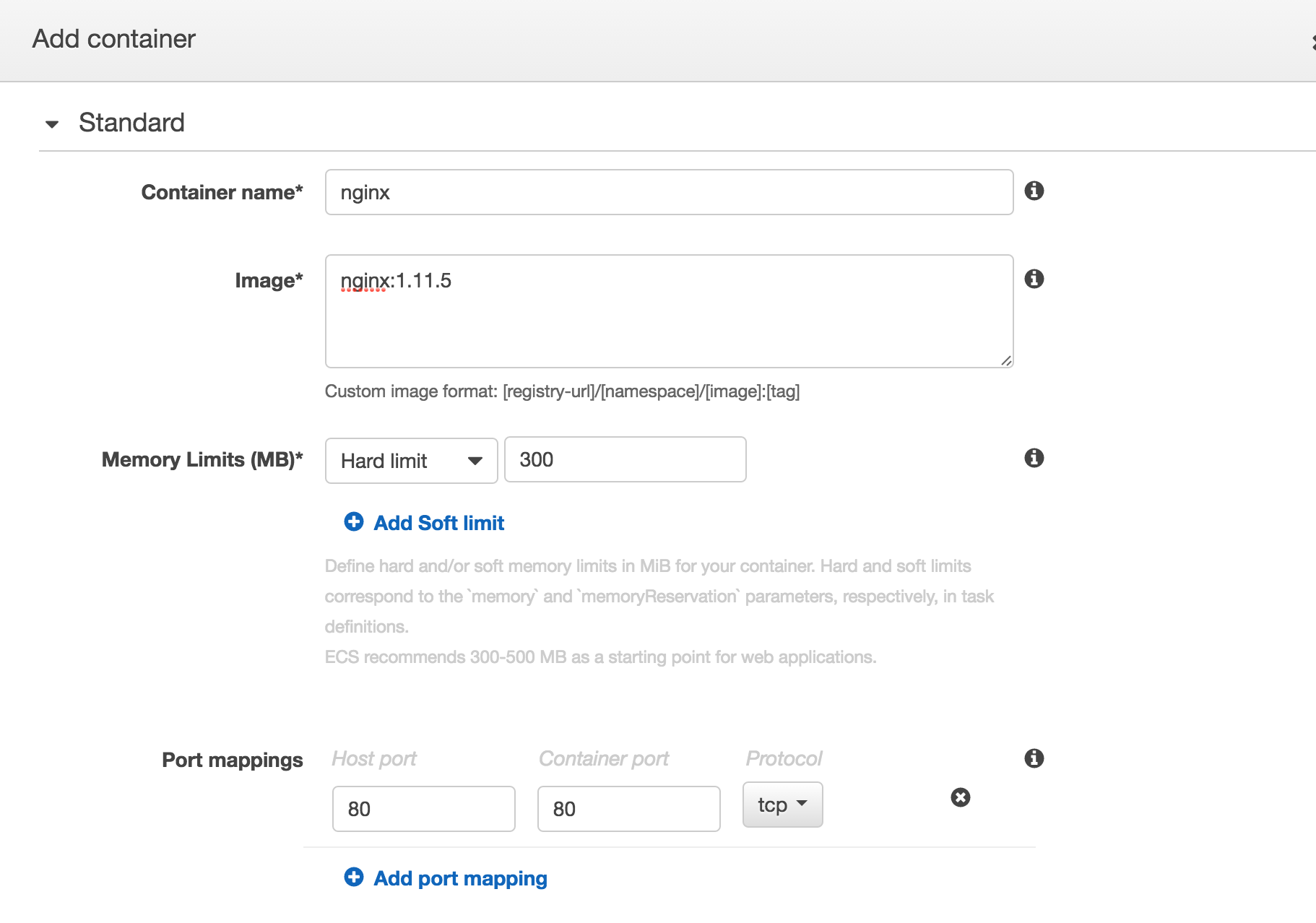

The first step is naming the container ghost and providing the image. In this case, I used the versioned image tag ghost:0.11.2. Using a versioned tag makes future updates easier: to upgrade, you just update the task definition with the new image version.

Additionally, I added port mappings for 2368 on the host and 2368 on the container to facilitate testing and direct connectivity.

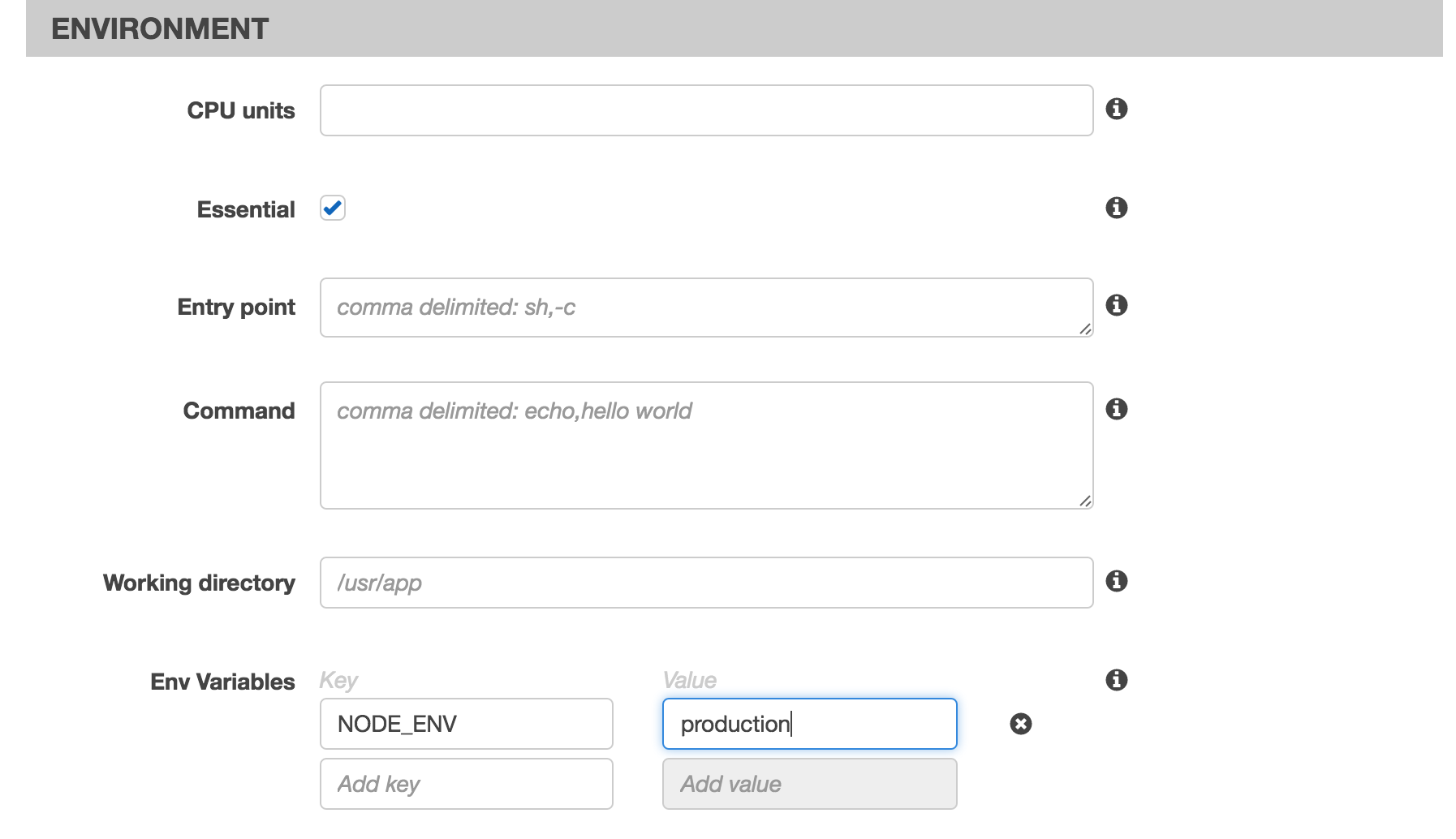

Next was adding the environment variable for NODE_ENV=production as was done in the Docker run command previously:

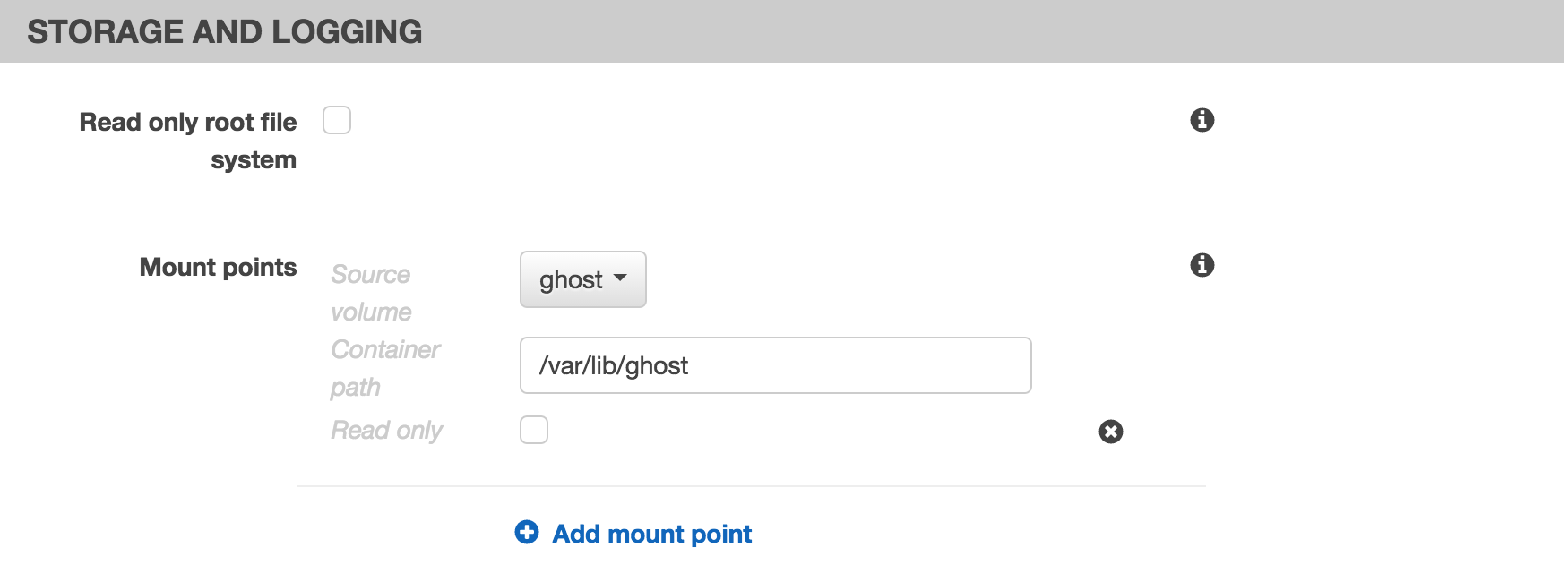

The final piece was mounting the ghost volume into the /var/lib/ghost path on the container.

At this point, the Ghost container is ready to rock.

NGINX Container

Next up is creating the NGINX container.

Start by defining the NGINX container and image. Again, I use a versioned image to facilitate upgrades in the future.

Additionally, this container has port 80 on the host mapped to port 80 in the container.

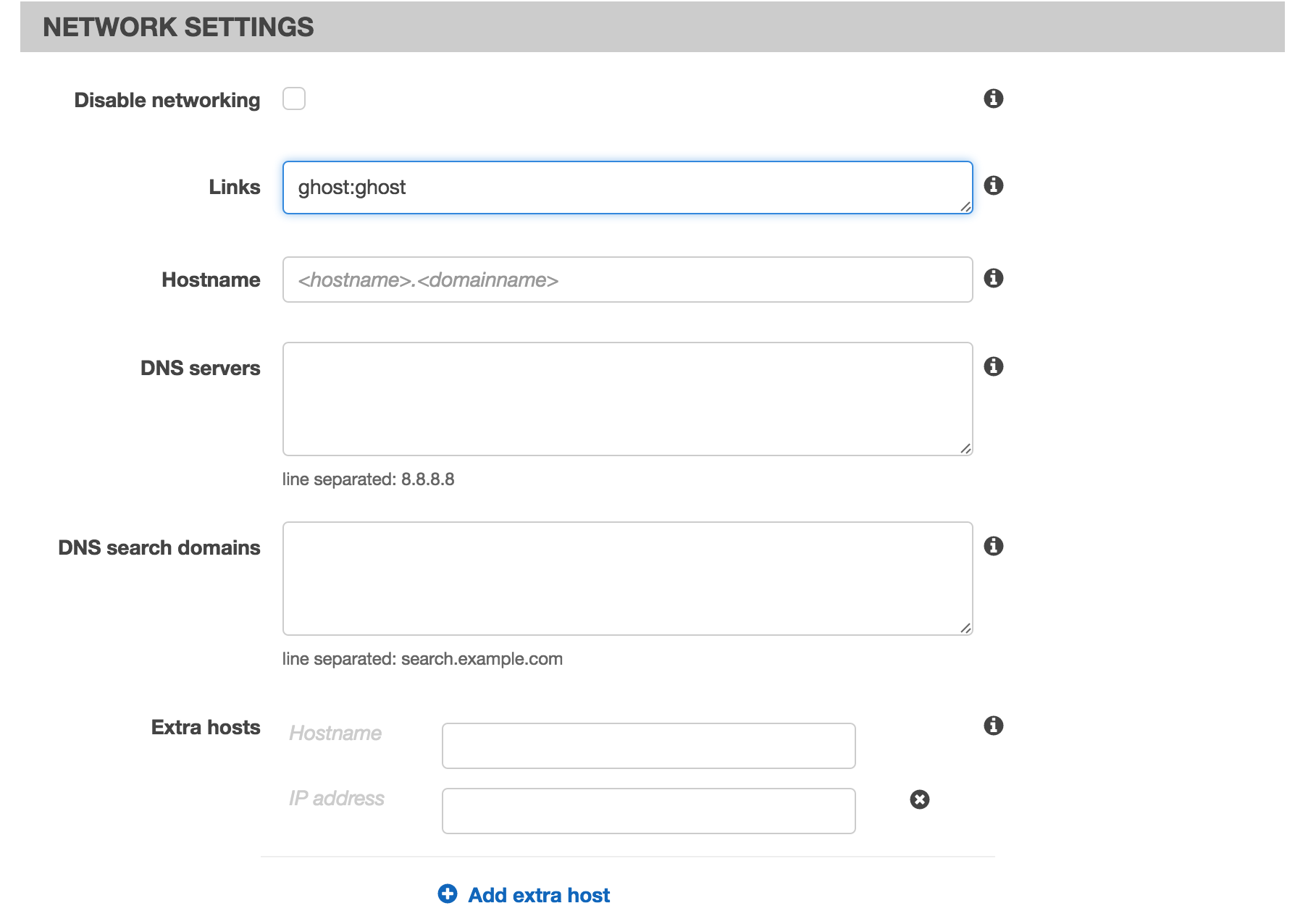

Then the container link should be created by linking the container named ghost to the local alias ghost.

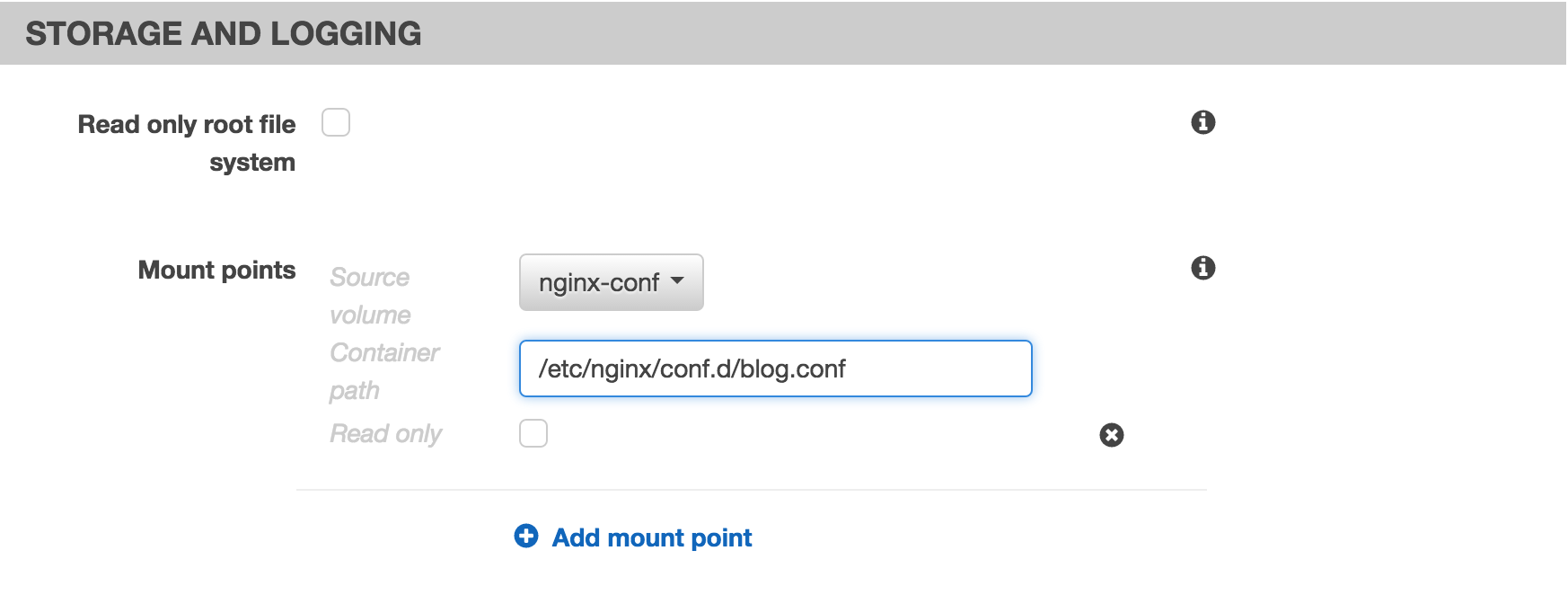

Finally, mount the configuration file in the nginx-conf volume to the required location at /etc/nginx/conf.d/blog.conf.

Save your containers and your task. You're all set!
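If you'd rather not click through the console, the same task can be described as a JSON task definition. The sketch below is my best reconstruction of the settings described above, with several assumptions: the family name ghost-blog, the nginx:1.11 tag (use whatever version you pinned), the memory limits, and /ecs/nginx/blog.conf as the host path behind the nginx-conf volume are all placeholders.

```shell
# Placeholders (assumptions, not from the console setup above).
TASK_FAMILY="ghost-blog"
NGINX_IMAGE="nginx:1.11"

# Write the task definition: two host volumes and two linked containers.
cat > ghost-task.json <<EOF
{
  "family": "${TASK_FAMILY}",
  "volumes": [
    { "name": "ghost", "host": { "sourcePath": "/ecs/ghost" } },
    { "name": "nginx-conf", "host": { "sourcePath": "/ecs/nginx/blog.conf" } }
  ],
  "containerDefinitions": [
    {
      "name": "ghost",
      "image": "ghost:0.11.2",
      "memory": 256,
      "essential": true,
      "portMappings": [ { "hostPort": 2368, "containerPort": 2368 } ],
      "environment": [ { "name": "NODE_ENV", "value": "production" } ],
      "mountPoints": [ { "sourceVolume": "ghost", "containerPath": "/var/lib/ghost" } ]
    },
    {
      "name": "nginx",
      "image": "${NGINX_IMAGE}",
      "memory": 128,
      "essential": true,
      "links": [ "ghost:ghost" ],
      "portMappings": [ { "hostPort": 80, "containerPort": 80 } ],
      "mountPoints": [
        { "sourceVolume": "nginx-conf", "containerPath": "/etc/nginx/conf.d/blog.conf" }
      ]
    }
  ]
}
EOF
```

With the AWS CLI configured, aws ecs register-task-definition --cli-input-json file://ghost-task.json should register it as a new revision.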

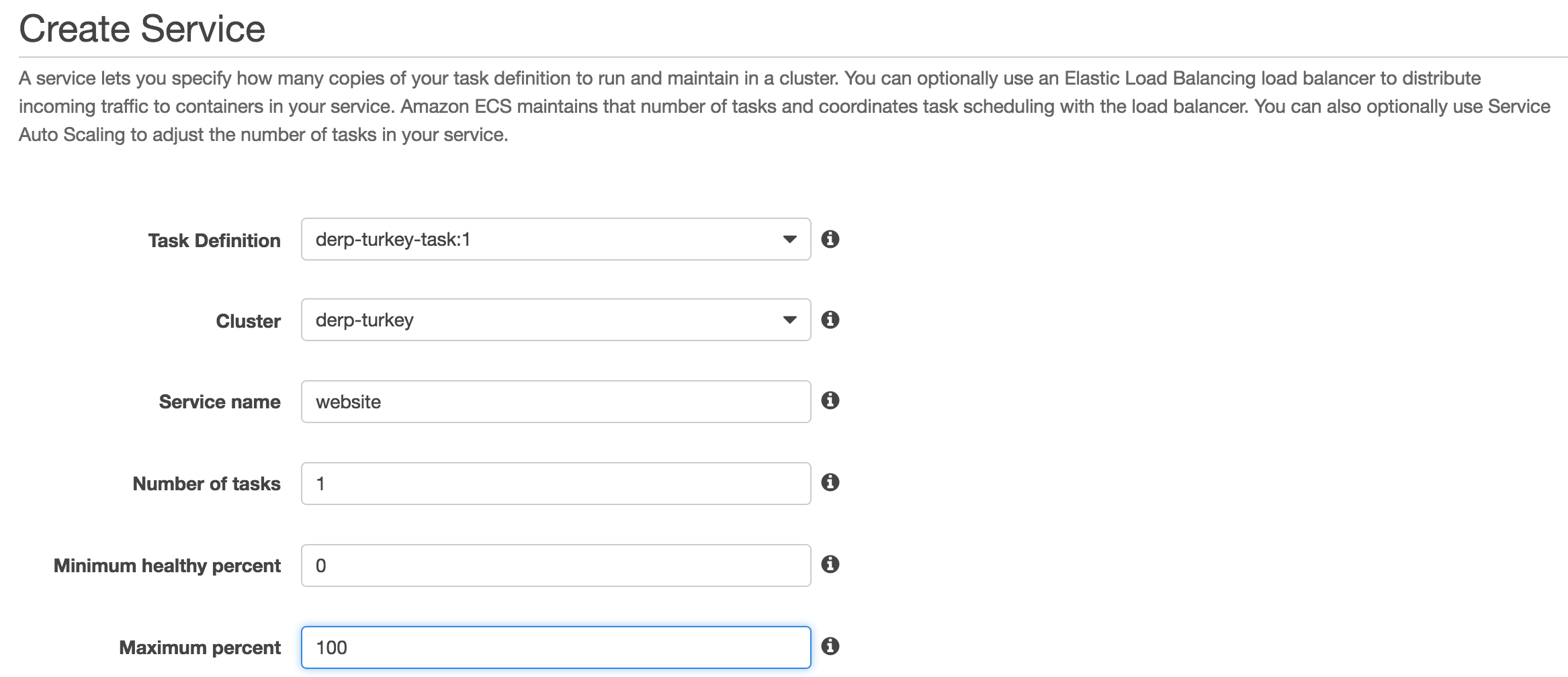

Step 4: Create the Service

The final piece of the puzzle is defining the service that executes your task on the instance. This piece is pretty easy as you basically select the Task and Cluster and then specify how many tasks you want to run.

In this case, I've also set the minimum healthy percentage to 0 and the maximum to 100. This means that when I update the service, it shuts down my site for a minute while the tasks update. That's OK for this deployment because it doesn't require zero-downtime updates.
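The same service settings can be expressed with the AWS CLI. This is a sketch rather than what I actually ran: the function name and its arguments are hypothetical, and the default desired count of 1 is my assumption (only one task can bind host port 80 on a single instance).

```shell
# Hypothetical helper: create_blog_service <cluster> <service> <task-family> [count]
create_blog_service() {
  cluster="$1"
  service="$2"
  task_family="$3"
  desired_count="${4:-1}"   # assumption: a single task by default

  # minimumHealthyPercent=0 lets ECS stop the old task before starting the new one.
  aws ecs create-service \
    --cluster "$cluster" \
    --service-name "$service" \
    --task-definition "$task_family" \
    --desired-count "$desired_count" \
    --deployment-configuration "maximumPercent=100,minimumHealthyPercent=0"
}
```

Calling it would look something like create_blog_service blog-cluster blog-service ghost-blog, with your own names swapped in.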

Once the service is defined, the Tasks should execute and the site will be live!

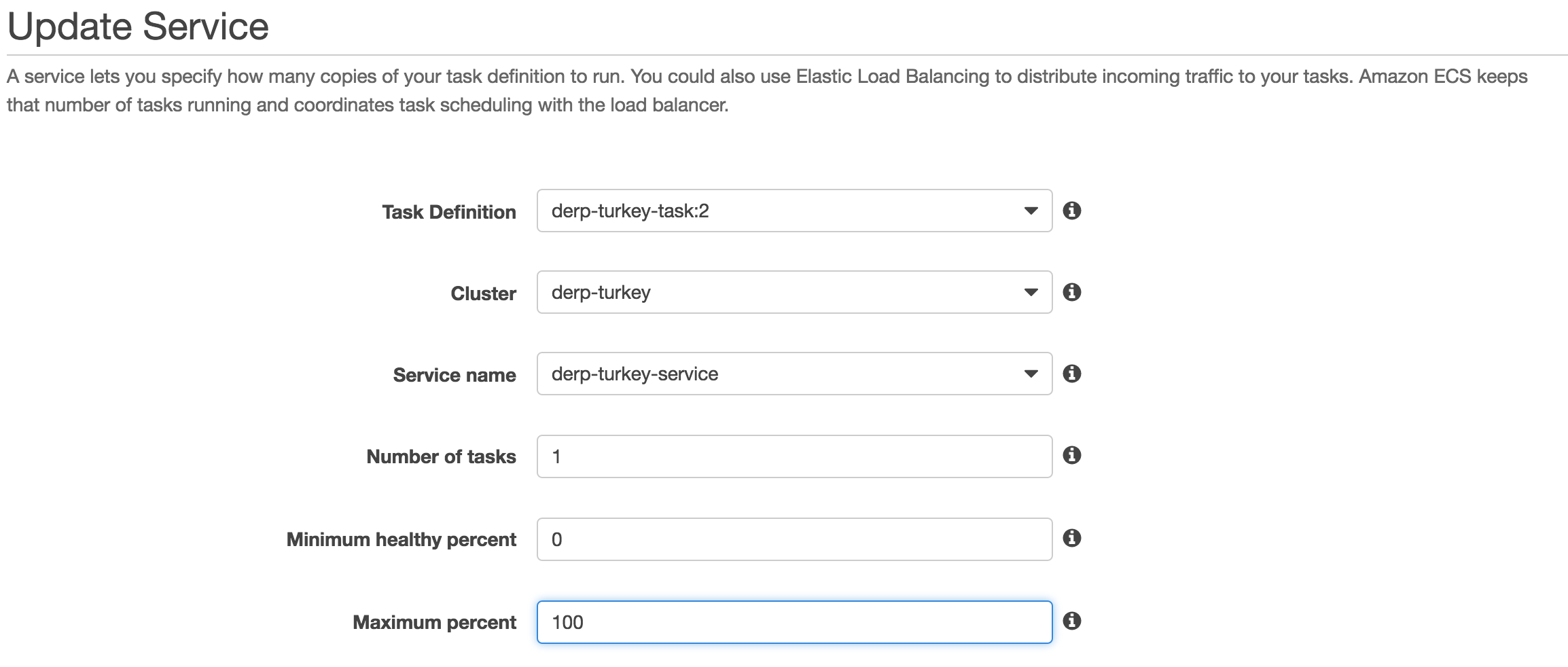

Updating the Site

The last piece is how to manage updates. Simply put, this requires creating a new revision of the Task Definition.

In the new revision of the Task Definition, you can point each container at the latest version of its image.

Once this is completed, you simply update the Service to point to the new revision of the Task Definition.

The cluster will automatically stop the existing containers and start new ones from the new task definition.
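From the command line, pointing the service at a new revision might look like the sketch below. The function name and arguments are hypothetical. The task definition is given as family:revision, for example ghost-blog:2.

```shell
# Hypothetical helper: update_blog_service <cluster> <service> <family:revision>
update_blog_service() {
  cluster="$1"
  service="$2"
  task_def="$3"   # e.g. "ghost-blog:2", the revision registered for the upgrade

  # ECS replaces the running tasks according to the service's deployment settings.
  aws ecs update-service \
    --cluster "$cluster" \
    --service "$service" \
    --task-definition "$task_def"
}
```

For example, update_blog_service blog-cluster blog-service ghost-blog:2 would roll the service to revision 2, assuming those are the names you used.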

Conclusion

That's a lot of information and much of it requires understanding ECS and Docker. Hopefully that gave you enough of an overview to get your deployment working.